The debate over social work examinations created by the Association of Social Work Boards (ASWB) has been hampered by the lack of openly available methodology and data checked by neutral third parties. As a result, test-takers, researchers, employers, and social work boards are left with little information on which to gauge the quality of social work licensing exams.

When ASWB describes their methodology, they merely list DIF (Differential Item Functioning) and leave it at that. Naming DIF tells us that ASWB looks for bias at the item level, but not which approach to DIF they use. Do they use a 2PL or 3PL model? How large is their sample size? Do they purify items with DIF when estimating a test-taker’s overall ability? There are many right answers to each of these questions, and providing any information on the process used by ASWB would help social workers and boards judge the strengths and limitations of their approach.
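To show how much "DIF" leaves unspecified, here is a minimal sketch of just one common approach, the Mantel-Haenszel procedure. ASWB has not said whether they use this or anything like it. The counts are made up for illustration, and the function name `mantel_haenszel_dif` is my own.

```python
import math

def mantel_haenszel_dif(strata):
    """Return (common odds ratio, ETS delta) for a single item.

    Each stratum groups test-takers with similar total scores and is a tuple:
    (ref_correct, ref_incorrect, focal_correct, focal_incorrect).
    """
    num = 0.0
    den = 0.0
    for ref_right, ref_wrong, foc_right, foc_wrong in strata:
        n = ref_right + ref_wrong + foc_right + foc_wrong
        if n == 0:
            continue  # skip empty score bands
        num += ref_right * foc_wrong / n
        den += ref_wrong * foc_right / n
    alpha_mh = num / den
    # ETS delta scale: negative values mean the item favors the reference group.
    delta_mh = -2.35 * math.log(alpha_mh)
    return alpha_mh, delta_mh

# Hypothetical counts for one item across three total-score bands (not ASWB data).
strata = [
    (40, 60, 30, 70),  # low scorers
    (70, 30, 55, 45),  # middle scorers
    (90, 10, 80, 20),  # high scorers
]
alpha_mh, delta_mh = mantel_haenszel_dif(strata)
print(f"MH odds ratio = {alpha_mh:.2f}, MH D-DIF = {delta_mh:.2f}")
```

Even in this tiny example, the analyst has to choose how to band total scores, which groups to compare, and how large a delta counts as a flag. Those are exactly the choices ASWB has not disclosed.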

ASWB would tell you to watch their psychometrics webinar!

I was a part of that webinar call, along with my introductory research methods students. They did not appreciate the perfunctory review of validity and reliability (complete with target diagrams!); nor did they appreciate the study from 2004 in which the developers of the ACT college entrance exam actually provided data to researchers to examine differential test functioning (i.e., whether the test is biased).

ASWB also explained that statistical significance does not actually mean large effect sizes in the real world…which really just reinforced everything I taught them about p-values vs. effect sizes in every statistical test, not just the ones used to validate exams…so thanks for the review?
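For anyone who missed that lecture, here is a minimal sketch of the p-value vs. effect size point. It runs a two-proportion z-test alongside Cohen's h on made-up numbers: a one-point gap in percent correct with 100,000 test-takers per group. None of these figures come from ASWB.

```python
import math

def two_proportion_test(p1, p2, n1, n2):
    """Two-sided z-test for a difference in proportions, plus Cohen's h."""
    pooled = (p1 * n1 + p2 * n2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail area
    cohens_h = 2 * math.asin(math.sqrt(p1)) - 2 * math.asin(math.sqrt(p2))
    return z, p_value, cohens_h

# Hypothetical: 71% vs. 70% correct on an item, 100,000 test-takers per group.
z, p_value, cohens_h = two_proportion_test(0.71, 0.70, 100_000, 100_000)
print(f"z = {z:.2f}, p = {p_value:.2g}, Cohen's h = {cohens_h:.3f}")
```

With samples this large, the test is highly significant even though the effect is far below the conventional "small" threshold of h = 0.2. The reverse also holds: with a small sample, a large gap can fail to reach significance, which is why the sample size for DIF analysis matters.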

Students did appreciate that their instructor put questions to social work regulators in the chat, like “why can’t we repeat this exam bias study you cited with ASWB test data?”, “what is your sample size for DIF analysis?”, and “what is the slope of the test information curve at the cut score, and why don’t you measure for that?” They went unanswered. I guess we never had time for those questions.

I’ll save you the fifty minutes of video, with the one screenshot that actually lists any information about the psychometric properties of the ASWB examinations and the procedures used to measure them. That’s it. The rest of the video is psychometrics 101 and a DTF study from 2004 that ASWB refuses to replicate with their own exam data.

Hey, is Cronbach’s alpha (that squiggly α = .85-.91) a good estimate of exam reliability? Nope! Let’s look at a recent OER textbook chapter on Item Response Theory by Jerry Bean:

IRT approaches the concept of scale reliability differently than the traditional classical test theory approach using coefficient alpha or omega. The CTT approach assumes that reliability is based on a single value that applies to all scale scores.

ASWB does not assess the test information curve or conditional standard errors. Instead, they rely on outdated methods and assumptions.
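To see what "a single value that applies to all scale scores" means in practice, here is a minimal sketch of the classical standard error of measurement, SEM = SD × √(1 − reliability). The alpha values are the ones ASWB reports. The score standard deviation of 10 points is purely my assumption, since ASWB does not publish one.

```python
import math

def ctt_sem(score_sd, reliability):
    """Classical test theory standard error of measurement."""
    return score_sd * math.sqrt(1 - reliability)

ASSUMED_SD = 10.0  # hypothetical score standard deviation, not an ASWB figure

for alpha in (0.85, 0.91):
    sem = ctt_sem(ASSUMED_SD, alpha)
    print(f"alpha = {alpha:.2f} -> SEM = {sem:.2f} points at every score level")
```

Whatever the real standard deviation is, the classical approach hands every test-taker the same error band, whether they scored near the cut score or far from it. A conditional standard error from IRT would instead report how precise the exam is at the cut score itself.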

Just ask the state boards! They judge whether the exam is good enough for their state!

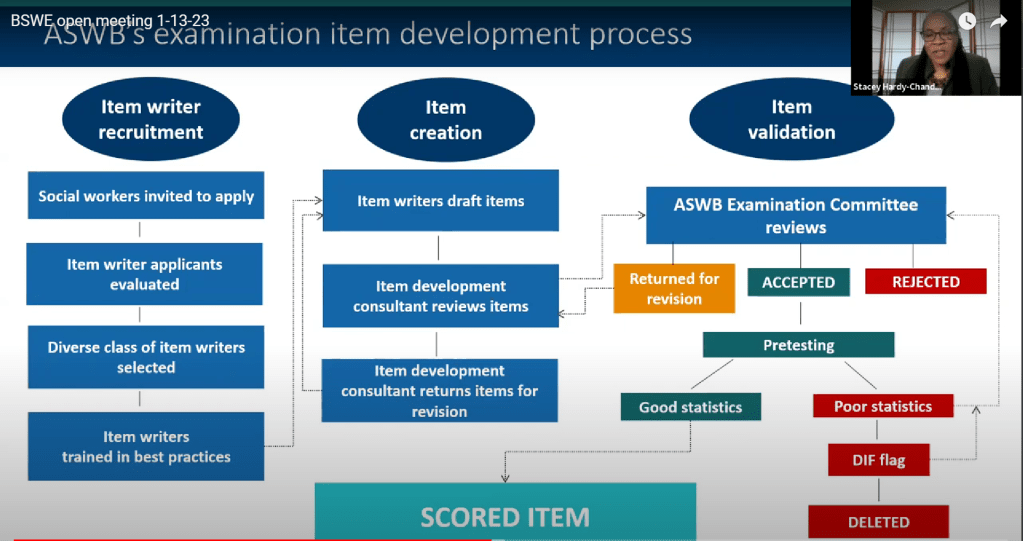

You’d think state boards would get more information, but that’s not true either. #StopASWB organizers have watched every public meeting of ASWB’s Nationwide Gaslighting Tour, submitted FOIAs, and written a lot of emails. All we can get is general information like the chart below, assuring state boards that the examinations are perfectly fine.

Once I watched enough of these presentations, I noticed a problem.

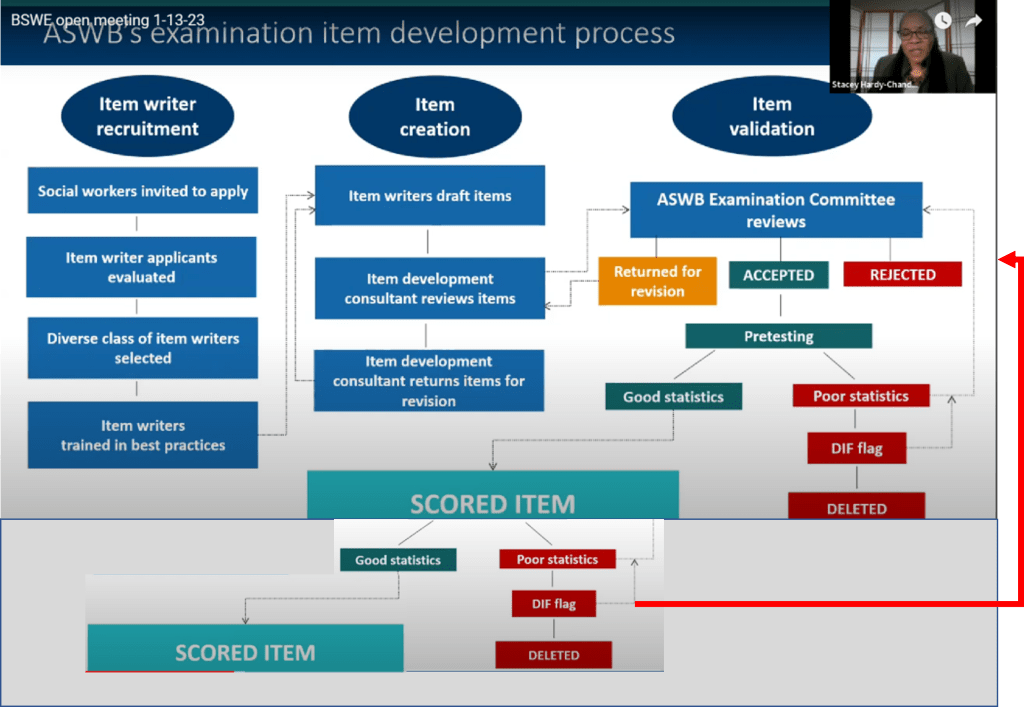

According to this diagram, differential item functioning analysis is performed on unscored items that do not impact pass/fail. Yet, you will note in this video (and in other public statements I will note later) ASWB and Dr. Hardy-Chandler claim to continue monitoring for bias (Differential Item Functioning) even after an item becomes scored.

ASWB never makes information about deleted, scored items public–let alone tells the social work boards that rejected qualified applicants based on exam items ASWB later removed for biased functioning.

As you can hear in her testimony, ASWB tests for biased items even after they are part of the scored item pool.

For those who would like to skip to the important part:

“Items only make it to the scored portion of the exam if they show good statistics, meaning they function consistently across self-reported groups and that’s monitored on an ongoing basis. And then even when an item makes it to the scored portion, the 150 of the exam, monitoring of its performance continues [emphasis added].”

Dr. Stacey Hardy-Chandler, Maryland Board of Social Work Open Meeting 1/13/2023 (Minute 28:00)

This accords with similar statements that ASWB has made on monitoring the performance of scored items. In volume 20 (2020) of Social Work Today, Lavina Harless of ASWB stated that

“Monitoring of item performance doesn’t end once an item moves out of pretest status. Scored items are continually monitored to ensure that performance doesn’t slip. If a scored item demonstrates a statistically significant drop in performance, it is taken out of use and returned to the examination committee for review. Should the committee decide to edit and keep the item, it returns to pretest status.”

In the September 2021 edition of the New Social Worker, Stacey Owens of ASWB stated that

“Scored items are continually monitored for DIF. On an annual basis, less than 5% of all items released show DIF. Items flagged for DIF are removed from the bank of potential exam questions” (para. 3)

Yet, in their webinar, ASWB insists that removed items never impact scores. How is this possible?

ASWB is lying to the public. That is all I can say for sure. Prior to 2023, they publicly stated that scored items are removed; yet, in their most recent statements, ASWB denied removing scored items because of biased functioning.

It’s certainly not on their diagram on how the exam is validated and tested. Indeed, you could see where they chopped that entire process off from the diagram they shared with the board. Weird…

Think about the ethical implications of that hidden data and procedure. ASWB monitors live, scored exam items and removes them from the exam pool without informing test-takers who failed because of them (or the social work boards who denied their license). When they present to social work boards, they only allude to continuous monitoring without providing any information on items that have been removed due to biased functioning.

How often are scored items removed due to biased functioning?

The most recent statistics ASWB reported were in the September 2021 edition of the New Social Worker, in which Stacey Owens of ASWB stated

“Scored items are continually monitored for DIF. On an annual basis, less than 5% of all items released show DIF. Items flagged for DIF are removed from the bank of potential exam questions” (para. 3)

So, that is up to 5% of the total exam pool that gets eliminated every year due to differential item functioning. Ideally, most of those items would be pretest items that never impact test-taker scores.

However, it is likely that some of those removed items were scored items. If so, biased items were among those 150 scored questions and could have decided the outcome for an examinee who failed by 1 or 2 points. A test-taker’s 175-item test, pulled at random from an ASWB test bank in which 5% of all items show DIF, should also have about 5% of its items showing DIF. How many items is that?

That means approximately 8.75 of every 175 exam items given to social workers, on every test they take right now as a condition for licensure, will be flagged for biased functioning. ASWB says “<5% of items,” so let’s round down and say 8 items are flagged for differential item functioning on every exam that social work boards require for licensure.

Let’s be generous and say that, on average, 6 of the 8 DIF-flagged items (75%) are in the pretesting section of the exam and had no impact on the test-taker’s score or licensure. Following this assumption would also mean that, on average, 6 of the 25 items approved provisionally by the examination committee for the average exam (nearly 25% of all pretest items on exams) displayed differential functioning. That’s not great, and we’ll put that in a little box as a little problem, and move on to the big one.

THAT MEANS 1 or 2 BIASED, SCORED ITEMS, ON AVERAGE, DETERMINE IF SOMEONE PASSES OR FAILS ASWB EXAMS!

HOW MANY TEST-TAKERS FAIL BY ONE OR TWO POINTS?! THOUSANDS!
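The back-of-the-envelope math above is easy to check with a minimal sketch. The 175-item form, the 5% ceiling, and the 6-of-8 pretest split are the figures and assumptions used in this post, not data released by ASWB, and the variable names are just for illustration.

```python
import math

TOTAL_ITEMS = 175                            # items per exam form, as used in this post
SCORED_ITEMS = 150                           # items that count toward pass/fail
PRETEST_ITEMS = TOTAL_ITEMS - SCORED_ITEMS   # unscored pretest items
DIF_RATE = 0.05                              # ceiling from ASWB's "less than 5%" statement

expected_flagged = TOTAL_ITEMS * DIF_RATE    # about 8.75 items per form
flagged = math.floor(expected_flagged)       # round down, since ASWB says "<5%"

ASSUMED_PRETEST_FLAGGED = 6                  # generous assumption: most flags are pretest items
scored_flagged = flagged - ASSUMED_PRETEST_FLAGGED
pretest_share = ASSUMED_PRETEST_FLAGGED / PRETEST_ITEMS

print(f"Expected flagged items per form: {expected_flagged:.2f} (rounded down to {flagged})")
print(f"Flagged items left in the scored section: {scored_flagged}")
print(f"Share of pretest items flagged: {pretest_share:.0%}")
```

Changing `ASSUMED_PRETEST_FLAGGED` shows how sensitive the conclusion is to that one guess, which is the point: only ASWB has the real split between pretest and scored items, and they have not published it.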

Social work boards in every state and DC rely on ASWB’s assertion that their cut score is well-tuned, so well-tuned that licensing decisions can turn on one exam item alone. Yet, according to ASWB’s public statements, it is likely that at least one or two items of EVERY EXAM SCORE SOCIAL WORK BOARDS USE TO MAKE LICENSING DECISIONS are going to be removed later, in secret, by ASWB due to differential item functioning.

ASWB has no publicly written procedure to inform the test-taker who was rejected, the board that rejected them, or the community that will now lose a qualified social worker–most likely, an aspiring clinician from oppressed and historically underrepresented groups in professional social work.

ASWB’s cut-scores are guesses (with massive consequences)

The previous section described how, based on ASWB’s publicly reported data, boards can conclude that ASWB’s cut scores are poorly tuned. Moreover, they should make ethical judgements about an examination provider that removes from circulation items that impact test-takers’ lives in deeply profound ways, without informing anyone–state boards, test-takers, or other stakeholders.

Even if ASWB did not hide data from these parties, its cut scores are–put charitably–best guesses about the actual functioning of its examinations. Let’s take a look at how the test information curve, a test-level measure of precision across ability levels that ASWB refuses to compute, could be used to determine how well cut scores differentiate between test-takers of low, medium, or high ability.

If you are having trouble with the abstract concepts in the video, please watch for the examples related to math ability standardized tests as well as depression scales that follow right after.

Essentially, the “hump” of the test information curve tells us where a good cut score might be. A middling social worker, someone right on the line of competent vs. incompetent, is who the test should be best at measuring.

ASWB does not measure the test information curve or ever investigate the psychometric functioning of their exam as a whole. Put simply, ASWB evaluates, item by item, how well each item distinguishes between social workers of different abilities. It does not look for bias at the test level. On the slide shown previously, it says they are “looking into” the use of differential test functioning approaches. They do not use them.

So, even if exam committee members were producing near-perfect exams with <1% of questions flagged for DIF, their cut scores–the things that determine whether social workers get licensed or not–are not determined by the actual psychometric properties of the exam. Instead, they are determined by the best guesses of subject matter experts. Actual real-world data from license-seeking test-takers is never used to establish or evaluate the exam’s cut-scores.